
9/28/2023 0 Comments Python csv writerMake sure you have whitespace between operators and commas: # from this I'll keep them as-is (except for the snake_case change) for the sake of your example.

This depends on your use case, and your columns have those names as well, so maybe it makes sense to you.

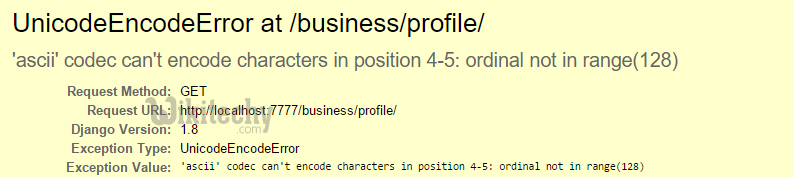

Also, names like var, var1, var666 should usually be swapped out for names a bit more meaningful to make it easier to deduce what values mean. Objects such as classes should have names like SomeClass. Variables and functions should be lowercased and snake-case, some_variable or var. Someone else can show the light of numpy/pandas as it pertains to this example. I will avoid using pandas for now, since it can be really easy to say "pandas will make X faster" and miss out on making what is currently written performant, even though it may be true. Is using the CSV reader/writer really the fastest way to do this? And if so, is there any way I can make my code run faster? to_csv(), but I never managed to improve the runtime more than a second or so. to_csv() function took as long to run as the entire script #1, so it didn't end up being faster. Knowing that line by line iteration is much slower than performing vectorized operations on a pandas dataframe, I thought I could do better, so I wrote a separate script (which I'll call script #2) where all the math was performed in a vectorized fashion, and then I used pandas. For a 2 million row CSV CAN file, it takes about 40 secs to fully run on my work desktop. It basically uses the CSV reader and writer to generate a processed CSV file line by line for each CSV. Writer.writerow(, var, var, var, var4, var5, var6, var7, var8, var9, var10, var11, var12]) Var13 = float((int(row,dataType)<<8)|(int(row,dataType)))ĭate = start_date+datetime.timedelta(seconds=time_s) Writer = csv.writer(csvOutput) #creates the writer object New_filename= trunc_filepath + folders_to_append + '/processed_' + cur_fileĬsvInput = open(filename, 'r') # opens the csv fileĬsvOutput = open(new_filename, 'w', newline='') New_filepath = trunc_filepath + folders_to_append + '/'

Trunc_filepath = '/'.join(filename.split('/')) #create new filename and filepathĬur_file = ''.join(filename.split('/')) #pulls filename of current fileįolders_to_append = '/Log Files-Processed/' + '/'.join(flist.split('/')) #folders to append to new filepath I wrote a script to parse these files that looks like this: import osįilenames = filedialog.askopenfiles(title="Select. I need to decode the hex, and then extract a bunch of different variables from it depending on the CAN ID.

id Flags DLC Data0 Data1 Data2 Data3 Data4 Data5 Data6 Data7 The files have 9 columns of interest (1 ID and 7 data fields), have about 1-2 million rows, and are encoded in hex. I'm currently working on a project that requires me to parse a a few hundred CSV CAN files at the time. I'm fairly new to python and pandas but trying to get better with it for parsing and processing large data files.
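The spacing and snake_case advice in this post can be illustrated with one line from the script. This is only a sketch: the helper name `build_output_path` and the sample path values are hypothetical and were introduced for the example, not taken from the original code.

```python
# Before (spacing as it appears in the script):
#   new_filename= trunc_filepath + folders_to_append + '/processed_' + cur_file


def build_output_path(trunc_filepath, folders_to_append, cur_file):
    """Build the processed-file path (hypothetical helper, snake_case name)."""
    # One space on each side of "=" and "+", and one space after each comma.
    new_filename = trunc_filepath + folders_to_append + '/processed_' + cur_file
    return new_filename


# Hypothetical sample values, chosen only to exercise the helper.
example = build_output_path('/data', '/Log Files-Processed/run1', 'can_log.csv')
print(example)
```

The behavior is unchanged; only the layout and the names differ, which is the point of the style advice.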

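The row-by-row approach described in the question (hex strings in, decoded numbers out, through `csv.reader` and `csv.writer`) might look roughly like the sketch below. Several things here are assumptions made for illustration: that the files are comma-delimited, that Data0 and Data1 hold the high and low byte of one value, and the names `combine_bytes` and `process`. The post does not show which columns the original script combines or how it branches on the CAN ID.

```python
import csv
import io


def combine_bytes(high_hex, low_hex):
    """Combine two hex byte strings into one 16-bit integer."""
    return (int(high_hex, 16) << 8) | int(low_hex, 16)


def process(csv_input, csv_output):
    """Read hex-encoded rows and write decoded rows, one row at a time."""
    reader = csv.reader(csv_input)
    writer = csv.writer(csv_output)
    header = next(reader)              # first row holds the column names
    id_col = header.index('id')
    high_col = header.index('Data0')   # assumed high byte
    low_col = header.index('Data1')    # assumed low byte
    writer.writerow(['id', 'value'])
    for row in reader:
        value = combine_bytes(row[high_col], row[low_col])
        writer.writerow([row[id_col], value])


# Hypothetical two-row sample using the column layout from the post.
sample = (
    "id,Flags,DLC,Data0,Data1,Data2,Data3,Data4,Data5,Data6,Data7\n"
    "1A0,0,8,01,FF,00,00,00,00,00,00\n"
    "1A1,0,8,10,00,00,00,00,00,00,00\n"
)
source = io.StringIO(sample)
result = io.StringIO(newline='')
process(source, result)
print(result.getvalue())
```

With real files, opening both handles in a single `with open(filename, newline='') as csv_input, open(new_filename, 'w', newline='') as csv_output:` statement ensures they are closed even if a row fails to parse.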